A new open source AI image generator capable of producing realistic pictures from any text prompt has seen stunningly swift uptake in its first week. Stability AI's Stable Diffusion, high fidelity but capable of running on off-the-shelf consumer hardware, is now in use by art generator services like Artbreeder, Pixelz.ai and more. But the model's unfiltered nature means not all of that use has been entirely above board.

For the most part, the use cases have been above board. For example, NovelAI has been experimenting with Stable Diffusion to produce art that can accompany the AI-generated stories created by users on its platform. Midjourney has launched a beta that taps Stable Diffusion for greater photorealism.

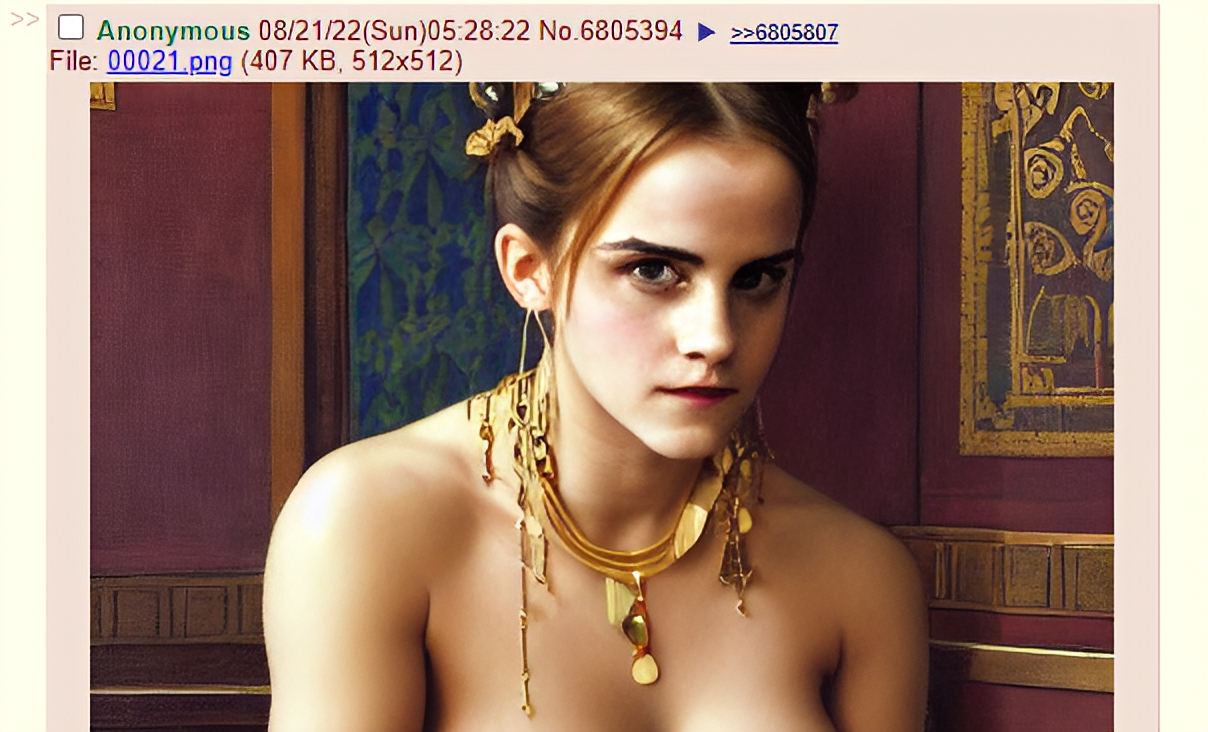

But Stable Diffusion has also been used for less savory purposes. On the infamous discussion board 4chan, where the model leaked early, several threads are dedicated to AI-generated art of nude celebrities and other forms of generated pornography.

Emad Mostaque, the CEO of Stability AI, called it "unfortunate" that the model leaked on 4chan and stressed that the company was working with "leading ethicists and technologies" on safety and other mechanisms around responsible release. One of these mechanisms is an adjustable AI tool, Safety Classifier, included in the overall Stable Diffusion software package, which attempts to detect and block offensive or undesirable images.

However, Safety Classifier, while on by default, can be disabled.

Stable Diffusion is very much new territory. Other AI art-generating systems, like OpenAI's DALL-E 2, have implemented strict filters for pornographic material. (The license for the open source Stable Diffusion prohibits certain applications, like exploiting minors, but the model itself isn't fettered at the technical level.) Moreover, unlike Stable Diffusion, many of those systems can't create art of public figures. Combined, these two capabilities could be risky, allowing bad actors to create pornographic "deepfakes" that, in a worst-case scenario, might perpetuate abuse or implicate someone in a crime they didn't commit.

A deepfake of Emma Watson, created by Stable Diffusion and published on 4chan.

Women, unfortunately, are by far the most likely victims of this. A study carried out in 2019 found that, of the 90% to 95% of deepfakes that are non-consensual, about 90% are of women. That bodes poorly for the future of these AI systems, according to Ravit Dotan, an AI ethicist at the University of California, Berkeley.

"I worry about other effects of synthetic images of illegal content: that it will exacerbate the illegal behaviors that are portrayed," Dotan told TechCrunch via email. "E.g., will synthetic child [exploitation] increase the creation of authentic child [exploitation]? Will it increase the number of pedophiles' attacks?"

Montreal AI Ethics Institute principal researcher Abhishek Gupta shares this view. "We really need to think about the lifecycle of the AI system, which includes post-deployment use and monitoring, and think about how we can envision controls that can minimize harms even in worst-case scenarios," he said. "This is particularly true when a powerful capability [like Stable Diffusion] gets into the wild that can cause real trauma to those against whom such a system might be used, for example, by creating objectionable content in the victim's likeness."

Something of a preview played out over the past year when, on the advice of a nurse, a father took pictures of his young child's swollen genital area and texted them to the nurse's iPhone. The photo automatically backed up to Google Photos and was flagged by the company's AI filters as child sexual abuse material, which resulted in the man's account being disabled and an investigation by the San Francisco Police Department.

If a legitimate photo could trip such a detection system, experts like Dotan say, there's no reason deepfakes generated by a system like Stable Diffusion couldn't, and at scale.

"The AI systems that people create, even when they have the best intentions, can be used in harmful ways that they don't anticipate and can't prevent," Dotan said. "I think that developers and researchers often underappreciated this point."

Of course, the technology to create deepfakes has existed for some time, AI-powered or otherwise. A 2020 report from deepfake detection company Sensity found that hundreds of explicit deepfake videos featuring female celebrities were being uploaded to the world's biggest pornography websites every month; the report estimated the total number of deepfakes online at around 49,000, over 95% of which were porn. Celebrities including Emma Watson, Natalie Portman, Billie Eilish and Taylor Swift have been the targets of deepfakes since AI-powered face-swapping tools entered the mainstream several years ago, and some, including Kristen Bell, have spoken out against what they view as sexual exploitation.

But Stable Diffusion represents a newer generation of systems that can create highly (if not perfectly) convincing fake images with minimal work by the user. It's also easy to install, requiring no more than a few setup files and a graphics card costing several hundred dollars on the high end. Work is underway on even more efficient versions of the system that can run on an M1 MacBook.

A Kylie Kardashian deepfake posted to 4chan.

Sebastian Berns, a Ph.D. researcher in the AI group at Queen Mary University of London, thinks the automation and the possibility of scaling up customized image generation are the big differences with systems like Stable Diffusion, and the main problems. "Most harmful imagery can already be produced with conventional methods but is manual and requires a lot of effort," he said. "A model that can produce near-photorealistic pictures may give way to personalized blackmail attacks on individuals."

Berns fears that personal photos scraped from social media could be used to condition Stable Diffusion or any such model to generate targeted pornographic imagery or images depicting illegal acts. There's certainly precedent. After reporting on the rape of an eight-year-old Kashmiri girl in 2018, Indian investigative journalist Rana Ayyub became the target of Indian nationalist trolls, some of whom created deepfake porn with her face on another person's body. The deepfake was shared by the leader of the nationalist political party BJP, and the harassment Ayyub received as a result became so bad that the United Nations had to intervene.

"Stable Diffusion offers enough customization to send out automated threats against individuals to either pay or risk having fake but potentially damaging pictures published," Berns continued. "We already see people being extorted after their webcam was accessed remotely. That infiltration step might not be necessary anymore."

With Stable Diffusion out in the wild and already being used to generate pornography, some of it non-consensual, it might become incumbent on image hosts to take action. TechCrunch reached out to one of the major adult content platforms, OnlyFans, but didn't hear back as of publication time. A spokesperson for Patreon, which also allows adult content, noted that the company has a policy against deepfakes and disallows images that "repurpose celebrities' likenesses and place non-adult content into an adult context."

If history is any indication, however, enforcement will likely be uneven, in part because few laws specifically protect against deepfaking as it relates to pornography. And even if the threat of legal action pulls some sites dedicated to objectionable AI-generated content under, there's nothing to prevent new ones from popping up.

In other words, Gupta says, it's a brave new world.

"Creative and malicious users can abuse the capabilities [of Stable Diffusion] to generate subjectively objectionable content at scale, using minimal resources to run inference (which is cheaper than training the entire model) and then publish them in venues like Reddit and 4chan to drive traffic and hack attention," Gupta said. "There is a lot at stake when such capabilities escape out 'into the wild,' where controls such as API rate limits and safety controls on the kinds of outputs returned from the system are no longer applicable."