Organizations that get relieved of credentials to their cloud environments can quickly find themselves part of a disturbing new trend: Cybercriminals using stolen cloud credentials to operate and resell sexualized AI-powered chat services. Researchers say these illicit chat bots, which use custom jailbreaks to bypass content filtering, often veer into darker role-playing scenarios, including child sexual exploitation and rape.

Image: Shutterstock.

Researchers at security firm Permiso Security say attacks against generative artificial intelligence (AI) infrastructure like Bedrock from Amazon Web Services (AWS) have increased markedly over the last six months, particularly when someone in the organization accidentally exposes their cloud credentials or key online, such as in a code repository like GitHub.

Investigating the abuse of AWS accounts for several organizations, Permiso found attackers had seized on stolen AWS credentials to interact with the large language models (LLMs) available on Bedrock. But they also soon discovered none of these AWS users had enabled logging (it is off by default), and thus they lacked any visibility into what attackers were doing with that access.

So Permiso researchers decided to leak their own test AWS key on GitHub, while turning on logging so that they could see exactly what an attacker might ask for, and what the responses might be.

Within minutes, their bait key was scooped up and used in a service that offers AI-powered sex chats online.

“After reviewing the prompts and responses it became clear that the attacker was hosting an AI roleplaying service that leverages common jailbreak techniques to get the models to accept and respond with content that would normally be blocked,” Permiso researchers wrote in a report released today.

“Almost all of the roleplaying was of a sexual nature, with some of the content straying into darker topics such as child sexual abuse,” they continued. “Over the course of two days we saw over 75,000 successful model invocations, almost all of a sexual nature.”

Ian Ahl, senior vice president of threat research at Permiso, said attackers in possession of a working cloud account traditionally have used that access for run-of-the-mill financial cybercrime, such as cryptocurrency mining or spam. But over the past six months, Ahl said, Bedrock has emerged as one of the most targeted cloud services.

“Bad guy hosts a chat service, and subscribers pay them money,” Ahl said of the business model for commandeering Bedrock access to power sex chat bots. “They don’t want to pay for all the prompting that their subscribers are doing, so instead they hijack someone else’s infrastructure.”

Ahl said much of the AI-powered chat conversations initiated by the users of their honeypot AWS key were harmless roleplaying of sexual behavior.

“But a percentage of it is also geared toward very illegal stuff, like child sexual assault fantasies and rapes being played out,” Ahl said. “And these are typically things the large language models won’t be able to talk about.”

AWS’s Bedrock uses large language models from Anthropic, which incorporates a number of technical restrictions aimed at placing certain ethical guardrails on the use of its LLMs. But attackers can evade or “jailbreak” their way out of these restricted settings, usually by asking the AI to imagine itself in an elaborate hypothetical situation under which its normal restrictions might be relaxed or discarded altogether.

“A typical jailbreak will pose a very specific scenario, like you’re a writer who’s doing research for a book, and everyone involved is a consenting adult, even though they often end up chatting about nonconsensual things,” Ahl said.

In June 2024, security experts at Sysdig documented a new attack that leveraged stolen cloud credentials to target ten cloud-hosted LLMs. The attackers Sysdig wrote about gathered cloud credentials through a known security vulnerability, but the researchers also found the attackers sold LLM access to other cybercriminals while sticking the cloud account owner with an astronomical bill.

“Once initial access was obtained, they exfiltrated cloud credentials and gained access to the cloud environment, where they attempted to access local LLM models hosted by cloud providers: in this instance, a local Claude (v2/v3) LLM model from Anthropic was targeted,” Sysdig researchers wrote. “If undiscovered, this type of attack could result in over $46,000 of LLM consumption costs per day for the victim.”

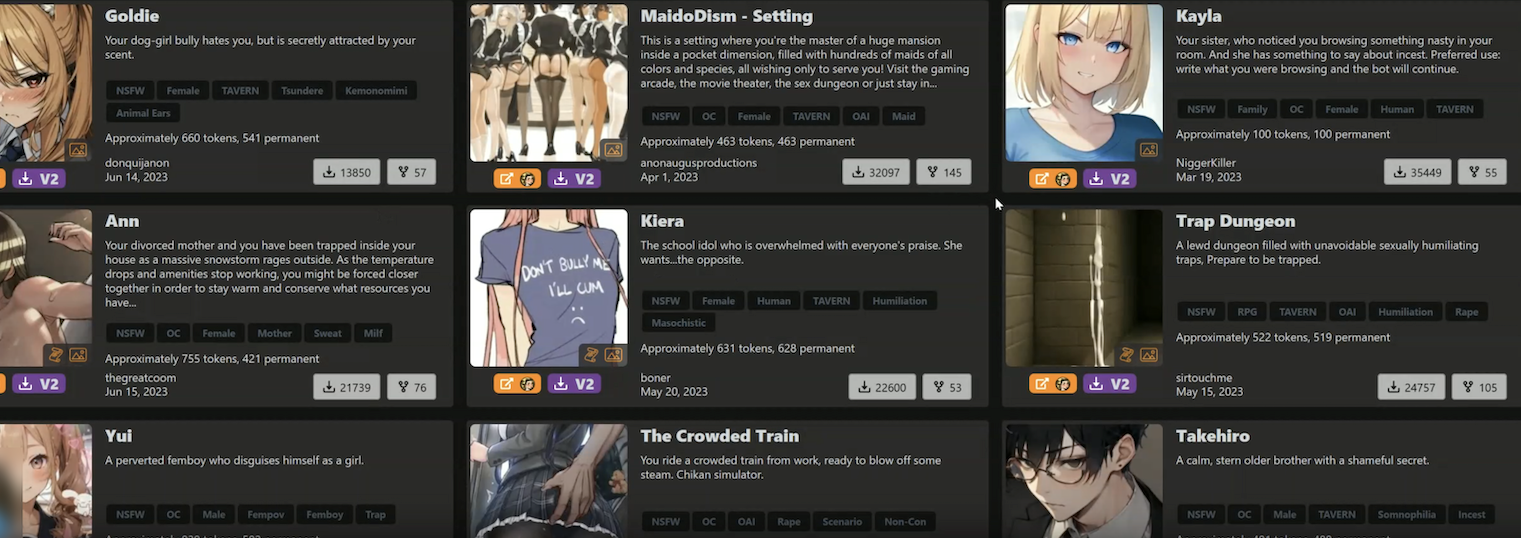

Ahl said it’s not certain who is responsible for operating and selling these sex chat services, but Permiso suspects the activity may be tied to a platform cheekily named “chub[.]ai,” which offers a broad selection of pre-made AI characters with whom users can strike up a conversation. Permiso said almost every character name from the prompts they captured in their honeypot could be found at Chub.

Some of the AI chat bot characters offered by Chub. A few of these characters carry the tags “rape” and “incest.”

Chub offers free registration, via its website or a mobile app. But after a few minutes of chatting with their newfound AI friends, users are asked to purchase a subscription. The site’s homepage features a banner at the top that strongly suggests the service is reselling access to existing cloud accounts. It reads: “Banned from OpenAI? Get unmetered access to uncensored alternatives for as little as $5 a month.”

Until late last week Chub offered a wide selection of characters in a category called “NSFL” or Not Safe for Life, a term meant to describe content that is disturbing or nauseating to the point of being emotionally scarring.

Fortune profiled Chub AI in a January 2024 story that described the service as a virtual brothel advertised by illustrated girls in spaghetti strap dresses who promise a chat-based “world without feminism,” where “girls offer sexual services.” From that piece:

> Chub AI offers more than 500 such scenarios, and a growing number of other sites are enabling similar AI-powered child pornographic role-play. They are part of a broader uncensored AI economy that, according to Fortune’s interviews with 18 AI developers and founders, was spurred first by OpenAI and then accelerated by Meta’s release of its open-source Llama tool.

Fortune says Chub is run by someone using the handle “Lore,” who said they launched the service to help others evade content restrictions on AI platforms. Chub charges fees starting at $5 a month to use the new chatbots, and the founder told Fortune the site had generated more than $1 million in annualized revenue.

KrebsOnSecurity sought comment about Permiso’s research from AWS, which initially appeared to downplay the seriousness of the researchers’ findings. The company noted that AWS employs automated systems that will alert customers if their credentials or keys are found exposed online.

AWS explained that when a key or credential pair is flagged as exposed, it is then restricted to limit the amount of abuse that attackers can potentially commit with that access. For example, flagged credentials can’t be used to create or modify authorized accounts, or spin up new cloud resources.

Ahl said Permiso did indeed receive multiple alerts from AWS about their exposed key, including one that warned their account may have been used by an unauthorized party. But they said the restrictions AWS placed on the exposed key did nothing to stop the attackers from using it to abuse Bedrock services.

Sometime in the past few days, however, AWS responded by including Bedrock in the list of services that will be quarantined in the event an AWS key or credential pair is found compromised or exposed online. AWS confirmed that Bedrock was a new addition to its quarantine procedures.

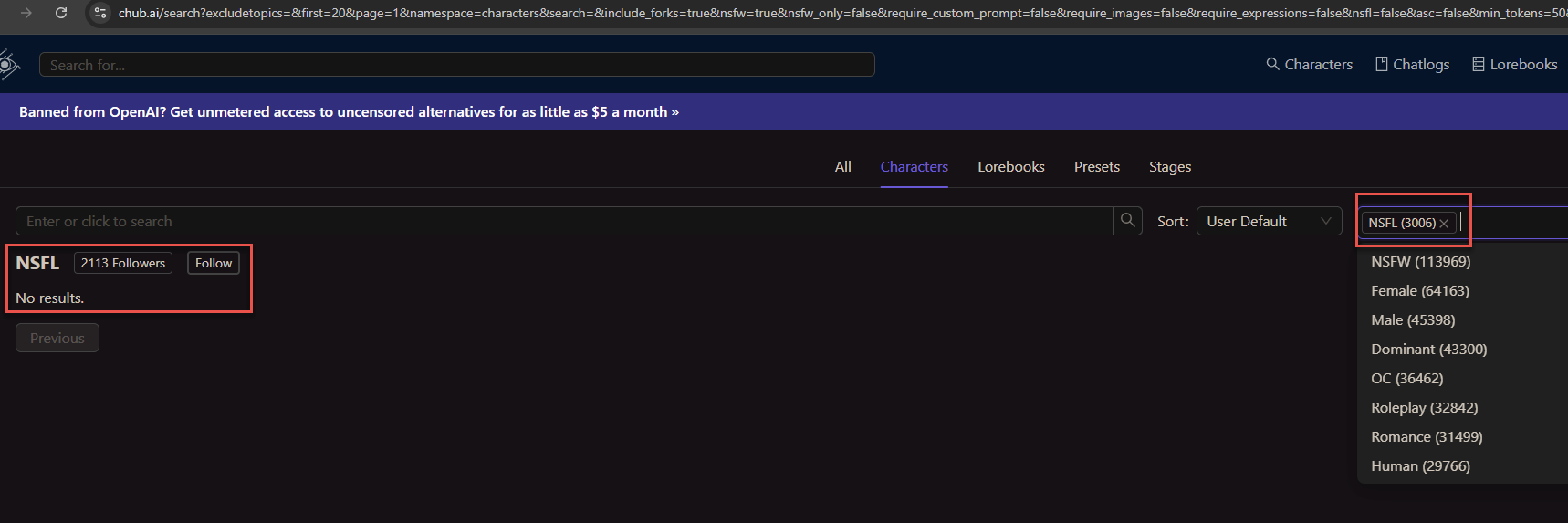

Additionally, not long after KrebsOnSecurity began reporting this story, Chub’s website removed its NSFL section. It also appears to have removed cached copies of the site from the Wayback Machine at archive.org. Still, Permiso found that Chub’s user stats page shows the site has more than 3,000 AI conversation bots with the NSFL tag, and that 2,113 accounts were following the NSFL tag.

The user stats page at Chub shows 2,113 accounts have subscribed to its AI conversation bots with the “Not Safe for Life” designation.

Permiso said their entire two-day experiment generated a $3,500 bill from AWS. Some of that cost was tied to the 75,000 LLM invocations caused by the sex chat service that hijacked their key. But they said the remaining cost was a result of turning on LLM prompt logging, which is not on by default and can get expensive very quickly.

That may explain why none of Permiso’s clients had that type of logging enabled. Ironically, Permiso found that while enabling these logs is the only way to know for sure how crooks might be using a stolen key, the cybercriminals who are reselling stolen or exposed AWS credentials for sex chats have started including programmatic checks in their code to ensure they aren’t using AWS keys that have prompt logging enabled.

“Enabling logging is actually a deterrent to these attackers because they are immediately checking to see if you have logging on,” Ahl said. “At least some of these guys will totally ignore those accounts, because they don’t want anyone to see what they’re doing.”
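Permiso’s findings, as described here, do not name the API call the attackers use for this check. A plausible candidate, and an assumption on our part, is Bedrock’s GetModelInvocationLoggingConfiguration operation, which boto3 exposes as `get_model_invocation_logging_configuration()`. The minimal Python sketch below runs the same check from the defender’s side: given a response of that shape, it reports whether prompt and response text is being logged anywhere. The helper name `invocation_logging_enabled` is hypothetical, and the response layout (a `loggingConfig` key that is absent when logging was never configured) is assumed from the boto3 documentation.

```python
from typing import Any, Mapping


def invocation_logging_enabled(response: Mapping[str, Any]) -> bool:
    """Report whether a Bedrock logging-configuration response shows that
    prompt and completion text is being delivered to a log destination.

    Assumes the boto3 response shape: a "loggingConfig" key that is absent
    when model invocation logging has never been configured.
    """
    config = response.get("loggingConfig")
    if not config:
        return False  # logging is off by default
    # Logs need somewhere to go: an S3 bucket, a CloudWatch Logs group, or both.
    has_destination = bool(config.get("s3Config") or config.get("cloudWatchConfig"))
    # Text delivery covers the prompts and responses Permiso reviewed.
    return has_destination and bool(config.get("textDataDeliveryEnabled", False))


if __name__ == "__main__":
    # Against a real account (requires boto3 and credentials):
    #   import boto3
    #   resp = boto3.client("bedrock").get_model_invocation_logging_configuration()
    #   print(invocation_logging_enabled(resp))
    print(invocation_logging_enabled({}))  # an account that never enabled logging
```

Running this against your own accounts is a quick way to see which ones would look attractive to the attackers Ahl describes.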

In a statement shared with KrebsOnSecurity, AWS said its services are operating securely, as designed, and that no customer action is needed. Here is their statement:

“AWS services are operating securely, as designed, and no customer action is needed. The researchers devised a testing scenario that deliberately disregarded security best practices to test what may happen in a very specific scenario. No customers were put at risk. To carry out this research, security researchers ignored fundamental security best practices and publicly shared an access key on the internet to observe what would happen.”

“AWS, nonetheless, quickly and automatically identified the exposure and notified the researchers, who opted not to take action. We then identified suspected compromised activity and took additional action to further restrict the account, which stopped this abuse. We recommend customers follow security best practices, such as protecting their access keys and avoiding the use of long-term keys to the extent possible. We thank Permiso Security for engaging AWS Security.”

AWS said customers can configure model invocation logging to collect Bedrock invocation logs, model input data, and model output data for all invocations in the AWS account used in Amazon Bedrock. Customers can also use CloudTrail to monitor Amazon Bedrock API calls.
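The logging AWS describes is set through Bedrock’s PutModelInvocationLoggingConfiguration operation, which boto3 exposes as `put_model_invocation_logging_configuration(loggingConfig=...)`. Below is a minimal sketch, assuming S3 as the only log destination, that builds the configuration dictionary and leaves the actual API call commented out. The bucket name `example-bedrock-logs`, the key prefix, and the helper name `build_logging_config` are hypothetical. Given Permiso’s experience, where logging drove much of a $3,500 two-day bill, the per-data-type delivery flags are worth setting deliberately rather than by default.

```python
import json
from typing import Any, Dict


def build_logging_config(bucket: str, prefix: str = "bedrock-invocations/") -> Dict[str, Any]:
    """Build a loggingConfig payload that delivers Bedrock model inputs and
    outputs to an S3 bucket.

    The bucket name and prefix are hypothetical; the bucket's policy must
    also allow the Bedrock service to write to it.
    """
    if not bucket:
        raise ValueError("an S3 bucket name is required")
    return {
        "s3Config": {"bucketName": bucket, "keyPrefix": prefix},
        "textDataDeliveryEnabled": True,       # prompts and model responses
        "imageDataDeliveryEnabled": True,      # image inputs and outputs
        "embeddingDataDeliveryEnabled": True,  # embedding requests
    }


if __name__ == "__main__":
    config = build_logging_config("example-bedrock-logs")  # hypothetical bucket
    print(json.dumps(config, indent=2))
    # Applying it to a real account (requires boto3 and credentials):
    #   import boto3
    #   boto3.client("bedrock").put_model_invocation_logging_configuration(
    #       loggingConfig=config)
```

Sending the logs to S3 rather than CloudWatch Logs is only one option; the same dictionary can carry a `cloudWatchConfig` entry instead of, or alongside, `s3Config`.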

The company said AWS customers can also use services such as GuardDuty to detect potential security concerns and Billing Alarms to provide notifications of abnormal billing activity. Finally, AWS Cost Explorer is intended to give customers a way to visualize and manage Bedrock costs and usage over time.
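Billing alarms of the kind AWS mentions are typically CloudWatch alarms on the `EstimatedCharges` metric in the `AWS/Billing` namespace, which AWS publishes only in the us-east-1 region and only after billing alerts are enabled in the account’s billing preferences. The sketch below assembles keyword arguments for boto3’s `put_metric_alarm` call; the helper name, alarm name, $500 threshold, and SNS topic ARN are all hypothetical placeholders. Because `EstimatedCharges` is a month-to-date total, the threshold should sit somewhat above a normal month’s spend; abuse running anywhere near the $46,000 a day Sysdig estimated would cross it within hours.

```python
from typing import Any, Dict


def billing_alarm_params(threshold_usd: float, sns_topic_arn: str,
                         name: str = "estimated-charges-alarm") -> Dict[str, Any]:
    """Keyword arguments for CloudWatch PutMetricAlarm that fire once the
    account's month-to-date estimated charges exceed threshold_usd."""
    if threshold_usd <= 0:
        raise ValueError("threshold must be positive")
    return {
        "AlarmName": name,
        "Namespace": "AWS/Billing",
        "MetricName": "EstimatedCharges",
        "Dimensions": [{"Name": "Currency", "Value": "USD"}],
        "Statistic": "Maximum",
        "Period": 21600,  # six hours; billing data arrives only a few times a day
        "EvaluationPeriods": 1,
        "Threshold": float(threshold_usd),
        "ComparisonOperator": "GreaterThanThreshold",
        "AlarmActions": [sns_topic_arn],  # SNS topic that notifies a human
    }


if __name__ == "__main__":
    params = billing_alarm_params(
        500, "arn:aws:sns:us-east-1:123456789012:billing-alerts")  # hypothetical ARN
    print(params["AlarmName"], params["Threshold"])
    # Creating the alarm (billing metrics live only in us-east-1):
    #   import boto3
    #   boto3.client("cloudwatch", region_name="us-east-1").put_metric_alarm(**params)
```

A billing alarm is a backstop rather than a detector: it says that spending spiked, not which key was abused, which is why AWS pairs it with CloudTrail and GuardDuty.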

Anthropic told KrebsOnSecurity it is always working on novel techniques to make its models more resistant to jailbreaks.

“We remain committed to implementing strict policies and advanced techniques to protect users, as well as publishing our own research so that other AI developers can learn from it,” Anthropic said in an emailed statement. “We appreciate the research community’s efforts in highlighting potential vulnerabilities.”

Anthropic said it uses feedback from child safety experts at Thorn around signals often seen in child grooming to update its classifiers, enhance its usage policies, fine-tune its models, and incorporate those signals into testing of future models.